Redshift WLM

1/9/2024

A queue with more memory and a lower concurrency level gives each query more power to finish the job.

We can check the memory allocation of our queues with the statement:

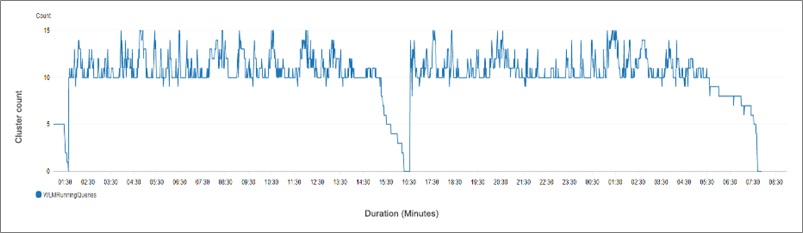

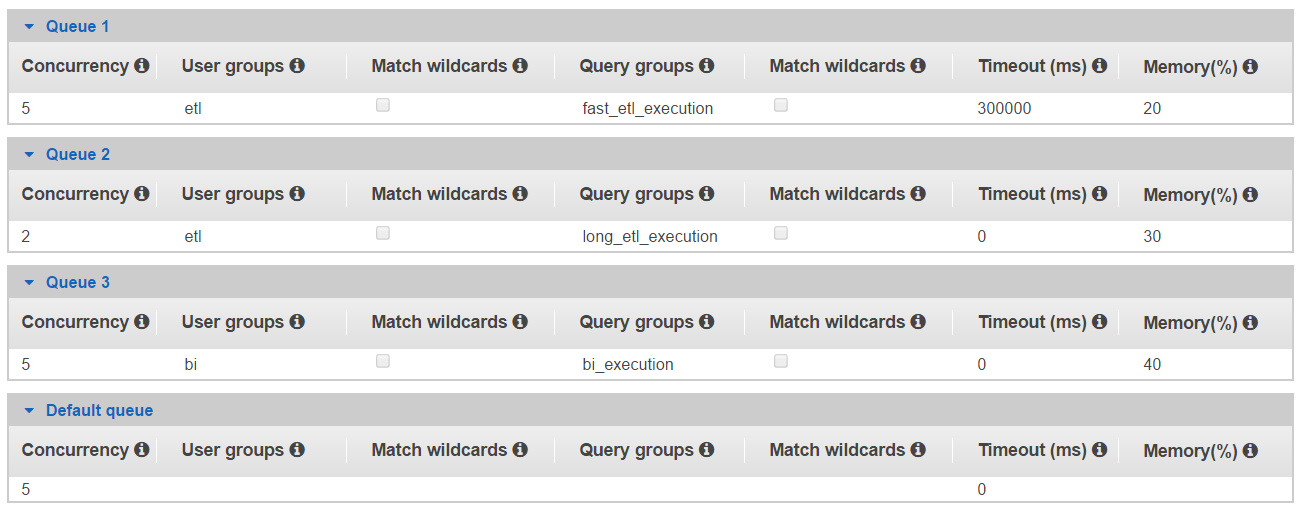
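The trade-off between memory and concurrency can be made concrete with a little arithmetic. The sketch below assumes that a queue's memory share is split evenly across its concurrency slots; the queue names and numbers are hypothetical and only serve as an illustration.

```python
def memory_per_slot(memory_percent: float, concurrency: int) -> float:
    """Percent of total WLM memory available to each concurrent query slot.

    Assumes the queue's memory is divided equally among its slots.
    """
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    return memory_percent / concurrency


# Hypothetical queues, for illustration only.
etl_slot = memory_per_slot(memory_percent=50, concurrency=2)
bi_slot = memory_per_slot(memory_percent=30, concurrency=10)

print(etl_slot, bi_slot)  # 25.0 3.0
```

With these made-up numbers, each query in the first queue gets roughly eight times the memory of a query in the second one, which is why a low-concurrency, high-memory queue suits heavy jobs.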

As mentioned, the user can change dynamic properties without restarting the Redshift cluster. This allows dynamic memory management when needed; we will look at some examples in the tips section. The following WLM properties are dynamic:

An example of a WLM configuration that handles a solid DWH/BI setup looks something like this:

It is important to define the ETL and BI user groups beforehand, or you will have to restart your Redshift cluster, as these parameters are static.

create group etl with user etl_execution

In this configuration, ad-hoc queries are handled by the default queue. The timeout parameter of the first defined queue of the user will cancel the execution in that queue and route it to the Job, if it takes more than 2 minutes (3,000,000 milliseconds).
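The difference between static and dynamic properties can be sketched as a small check that tells you whether a planned change would need a cluster restart. This is only an illustration: the article states that user groups are static, while treating `query_group` as static too, and the key names themselves, are assumptions modeled on the `wlm_json_configuration` parameter.

```python
# Properties assumed to be static. "user_group" comes from the article;
# "query_group" is an assumption added for this sketch.
STATIC_KEYS = {"user_group", "query_group"}


def needs_reboot(old_queue: dict, new_queue: dict) -> bool:
    """Return True if any changed property of the queue is a static one."""
    keys = old_queue.keys() | new_queue.keys()
    changed = {k for k in keys if old_queue.get(k) != new_queue.get(k)}
    return bool(changed & STATIC_KEYS)


# Hypothetical queue definition and two candidate changes.
before = {"user_group": ["etl"], "query_concurrency": 3, "memory_percent_to_use": 50}
more_memory = dict(before, memory_percent_to_use=60)
new_group = dict(before, user_group=["etl", "bi"])

print(needs_reboot(before, more_memory))  # False
print(needs_reboot(before, new_group))    # True
```

Running a check like this before applying a configuration change makes it easier to plan restarts for a maintenance window.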

For user-defined queues, besides the parameters listed for the default queue, you can change the user groups and query groups parameters. Smart use of queue parameters allows users to optimize the time and execution cost of a query.

The concurrency level specifies the number of queries that run concurrently within a particular queue.

With user groups, a query executed by a member of a user group runs inside the queue assigned to that user group.

A query group acts as a label: users can assign queries to a particular queue on the fly using this label.

You have the option of changing the percentage of memory assigned to each queue by setting the WLM memory percent parameter. The percentages for all the queues add up to 100%.

With the timeout parameter, you specify the amount of time, in milliseconds, that Redshift waits for a query to execute before canceling the query. Note that the timeout is based on query execution time, which doesn't include time spent waiting in a queue.

Now that we know the main points, let's move on to the challenges.

Unlike transactional systems, which have queries of uniform size and execution cost, data warehouse queries vary greatly in execution cost, time, and result set. Optimal execution of these queries necessitates a balanced structure of execution queue configurations dedicated to different query sizes and/or priorities. Arriving at an optimal queue setting for the Redshift cluster is a challenge and needs to take into account the specific implementation of user requirements. We want to make sure that slow-running queries are not blocking quick-running queries that execute in a matter of minutes or seconds.

The WLM configuration properties are either dynamic or static. If you change any of the dynamic properties, you don’t need to reboot your cluster for the changes to take effect, unlike a change to the static properties. With a defined queue parameter, a dynamic execution parameter can be set for specific queries that impact their performance.
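To make the queue parameters more tangible, here is a minimal Python sketch of a queue list, a check that the memory percentages add up to 100%, and a simplified routing rule (first queue whose user group or query group matches, otherwise the default queue). The key names are assumed to mirror the `wlm_json_configuration` parameter, and the groups, labels, and numbers are hypothetical.

```python
# Hypothetical queue list; the last entry plays the role of the default queue.
wlm_config = [
    {"user_group": ["etl"], "query_group": [], "query_concurrency": 3,
     "memory_percent_to_use": 50, "max_execution_time": 3000000},
    {"user_group": ["bi"], "query_group": ["reports"], "query_concurrency": 10,
     "memory_percent_to_use": 30, "max_execution_time": 120000},
    {"user_group": [], "query_group": [], "query_concurrency": 5,
     "memory_percent_to_use": 20},
]


def total_memory_percent(config):
    """Sum of the memory percentages assigned to all queues."""
    return sum(queue["memory_percent_to_use"] for queue in config)


def route(config, user_groups, query_group=None):
    """Index of the first queue matching a user group or the query group label.

    Falls back to the last queue, which is treated as the default queue.
    """
    for index, queue in enumerate(config):
        if set(queue["user_group"]) & set(user_groups):
            return index
        if query_group is not None and query_group in queue["query_group"]:
            return index
    return len(config) - 1


assert total_memory_percent(wlm_config) == 100

print(route(wlm_config, ["etl"]))        # 0
print(route(wlm_config, [], "reports"))  # 1
print(route(wlm_config, ["analysts"]))   # 2
```

The routing function is deliberately simplified: it ignores the superuser queue and wildcard matching, and only shows how group membership and labels decide where a query lands.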
We use Redshift's Workload Management console to define new user-defined queues and to define or modify their parameters. We can also use it to define the parameters of the existing default queue.

By default, Amazon Redshift has three queue types: the superuser queue, the default queue, and user-defined queues.

The superuser queue is reserved for running commands related to the system, troubleshooting, or some emergency manual operations. This queue cannot be configured and can only process one query at a time.

Every Redshift cluster has a default queue. The default queue comes with a default concurrency level of 5. For the default queue, you can change the concurrency, timeout, and memory allocation. Any queries that are not routed to other queues run in the default queue.

Besides the default queue, you can add other user-defined queues.

Setting up a Redshift cluster that hangs on some number of query executions is always a hassle. Usually, the hangups could be mitigated in advance with a good Redshift query queue setup. When users run queries in Amazon Redshift, the queries are routed to query queues. Amazon Redshift has implemented a mechanism with which we can modify the queues to our advantage. In this article, you will learn the challenges and some best practices on how to modify query queues and the execution of queries to maintain an optimized query runtime.

Before we go into the challenges, let’s start by discussing the key components of Redshift.

Amazon Redshift Workload Manager (WLM) is a tool for managing user-defined query queues in a flexible manner.
